A parser translates wikitext to HTML for viewing. Since there are quite a few parser projects for MediaWiki’s markup, I benchmarked some of them to see how fast they run.

Parsers

| Parser | Language | Description |
|---|---|---|
| MediaWiki 1.18.0 | PHP | Parser from the production MediaWiki, templates disabled. |
| PHP5 Wiki Parser | PHP | A series of regular expression matches to replace various elements of wikitext. |
| xWiki renderer | Java | Uses JavaCC parser generator. Used in xWiki. |
| MyLyn WikiText | Java | Used in MyLyn. |
| Sweble | Java | JFlex-generated lexer and Rats!-generated parser. |
| Bliki 3.0.16 | Java | |
| Kiwi | C | Uses leg-generated parser. Used on aboutus.org. |
| flexbisonparse | C | flex-generated lexer and bison-generated parser. |

I also tried Wiky (Ruby), WikiModel 2.0.6 (Java), and libmwparser. These crashed on some of the test documents…

Although this was intended to be a comparison between programming/scripting languages, the data isn’t really valid for this purpose. The algorithms, the subset of the language syntax supported, and the correctness of the output all vary between the parsers. Draw your own conclusions…

Test documents

I just chose a bunch of mostly-random documents (using Special:Random) that exercised various features of the language (short/long documents, tables, images).

- Agasanahalli (Dharwad) (1,587 bytes, a short article)

- Predicted outcome value theory (11,331 bytes, mainly text)

- Eurosong ’06 (12,186 bytes, lots of tables)

- Automobile (49,471 bytes)

- Mediawiki (80,149 bytes)

- List of tallest buildings in New York City (81,648 bytes, more tables with images)

- Nuclear program of Iran (292,201 bytes, a long article)

- The Young and the Restless minor characters (386,012 bytes, a long article)

Results

It’s not surprising that MediaWiki’s parser is the slowest of the bunch. It’s written in a scripting language (PHP), is the most feature-complete, and doesn’t use fancy parsing algorithms. PHP5 Wiki Parser is probably faster because it processes only a small subset of the syntax. As far as I know, a few of the others are in production use: xWiki (parser in xWiki), WikiText (MyLyn), and Kiwi (parser used on aboutus.org). Flexbisonparse stands out as being particularly fast (113x!), and it would be interesting to see whether it can robustly support a sufficient subset of the MediaWiki syntax in production without giving up all its speed. Flex and Bison are both around 25 years old, yet they’re both still alive and well.

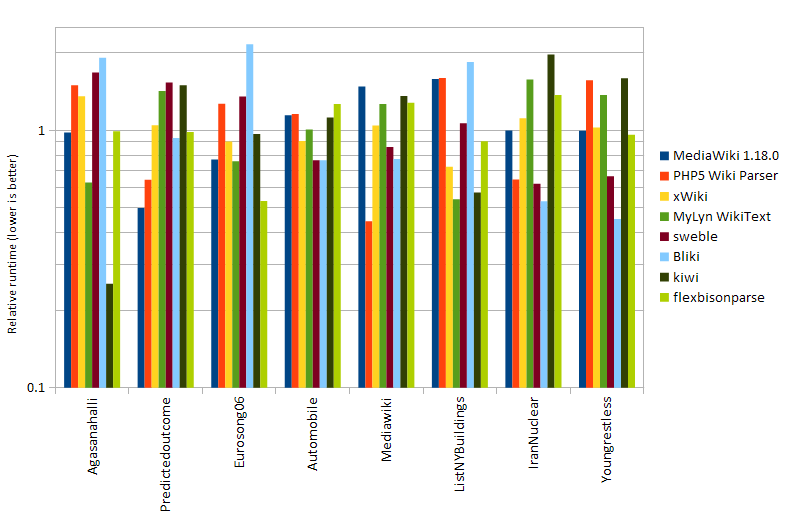

Here are some normalized runtimes broken down by document. The objective is to show whether certain parsers have particular strengths for particular document types. The data are normalized to the parsers’ geomean runtime so the geomean for each parser is 1. The data are also normalized by document so that the geomean for each document is also 1. The relative runtimes appear quite random: none of the parsers seem to scale particularly well or poorly with document length.
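To make the double normalization concrete, here is a minimal Python sketch of one way to get both sets of geomeans equal to 1: center the log-runtimes by row and by column. The function names and the sample numbers are made up for illustration; they are not the measurements from this post, and this is not necessarily how the original plot was produced.

```python
import math

def geomean(values):
    """Geometric mean of a sequence of positive numbers."""
    values = list(values)
    return math.exp(sum(math.log(v) for v in values) / len(values))

def double_normalize(runtimes):
    """Normalize runtimes[parser][doc] so that every parser's geomean
    and every document's geomean is 1 (double-centering in log space)."""
    parsers = list(runtimes)
    docs = list(runtimes[parsers[0]])
    logs = {p: {d: math.log(runtimes[p][d]) for d in docs} for p in parsers}
    # Mean log-runtime per parser (row), per document (column), and overall.
    row = {p: sum(logs[p].values()) / len(docs) for p in parsers}
    col = {d: sum(logs[p][d] for p in parsers) / len(parsers) for d in docs}
    grand = sum(row.values()) / len(parsers)
    return {
        p: {d: math.exp(logs[p][d] - row[p] - col[d] + grand) for d in docs}
        for p in parsers
    }

# Placeholder runtimes in seconds (hypothetical, NOT the measured data).
sample = {
    "parser_a": {"short": 0.02, "tables": 0.30, "long": 4.0},
    "parser_b": {"short": 0.01, "tables": 0.05, "long": 0.9},
    "parser_c": {"short": 0.001, "tables": 0.004, "long": 0.05},
}
normalized = double_normalize(sample)
```

After this step, a value above 1 means the parser is slower on that document than its overall speed and the document's overall difficulty would predict; a value below 1 means it is faster.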

Hi Henry,

Thank you for using my project in the above tests. You’re correct that a lot of the syntax formatting is incomplete especially tables. I’ve found it hard to find any agreed standardisation on wiki syntax.

I’ve done more work around the PMWIKI format (Mainly because it seems more formally documented) so that format is further forward than MediaWiki.

I’ll be interested in your work comparing formats especially your tests in comparison with MediaWiki. Would you release the scripts you used to do the comparison? Would you be happy for me to use, modify and redistribute on my site?

Regards,

Dan

Hi Dan, nice to hear from you.

Yeah, the impression from what I’ve been reading is that MediaWiki syntax isn’t really formal or well-defined. I didn’t worry too much about correctness though, as I was just aiming for a performance comparison between programming languages on solving roughly the same problem…

I just wrote a wrapper around each parser…no fancy scripts. I’d be glad to send you what I have. Were you interested in MediaWiki, or the rest of them too?
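For anyone wanting a starting point, a wrapper of this kind could look roughly like the Python sketch below. It is not the code used for the numbers above: `time_parser`, the `parse` callable, and the stand-in parser are all hypothetical, and taking the best of a few repeats is just one reasonable choice.

```python
import time

def time_parser(parse, documents, repeats=3):
    """Time a parse(text) callable on each document.

    documents maps a document name to its wikitext.
    Returns {name: best wall-clock time in seconds over `repeats` runs}.
    """
    results = {}
    for name, text in documents.items():
        best = float("inf")
        for _ in range(repeats):
            start = time.perf_counter()
            parse(text)
            best = min(best, time.perf_counter() - start)
        results[name] = best
    return results

def toy_parse(text):
    """Stand-in 'parser' for illustration only: swaps bold markers for a tag."""
    return text.replace("'''", "<b>")

# Made-up documents; a real run would load the test articles from disk.
docs = {"short": "'''Hello''' world", "long": "'''Hello''' world\n" * 10000}
timings = time_parser(toy_parse, docs)
```

Each real parser would need its own small adapter that exposes it as a `parse(text)` call (or that starts it as an external process), and process start-up time should be kept out of the measurement if only parsing speed is of interest.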

MediaWiki: I modified maintenance/compareParsers.php into timeParsers.php, and edited some of the core code to disable database queries for templates. A diff for MediaWiki 1.18.0 is here: http://blog.stuffedcow.net/wp-content/uploads/2012/01/mw_timeparser.diff_.txt